Information

Aug 4, 2023

Considering Fairness in Medical AI: Review and Recommendations Developed with Domestic Stakeholders

This paper reviews the risks of bias and discrimination in AI, which is spreading rapidly in medical settings, and summarizes measures for delivering its benefits fairly to every patient.

Paper

Fairness of artificial intelligence in healthcare: review and recommendations

Japanese Journal of Radiology

https://doi.org/10.1007/s11604-023-01474-3

Author's Comments

This paper brings together the efforts of medical AI stakeholders from across Japan, including Taichi Kakinuma, a renowned lawyer in the AI field, who participated as the second author. Now that AI is actually being used in medical settings, we felt it necessary to discuss fairness and bias directly, and we exchanged opinions with stakeholders from the early stages of writing. We also refined the manuscript repeatedly with the help of large language models (LLMs), which I believe helped us incorporate more multifaceted and objective perspectives. Perhaps because the work attracted significant attention, citations increased rapidly after publication, with more than 150 in 2024 alone (according to Google Scholar). Knowing that our work is of interest to so many people, I feel both a great responsibility and a sense of fulfillment.

Paper Overview

This article is an open-access review paper published in the Japanese Journal of Radiology. It considers, from both theoretical and practical perspectives, how bias and unfair results affect medical practice, including how AI-supported diagnosis might affect patients' health and treatment opportunities. The paper emphasizes the importance of preparing diverse datasets and of collaboration among healthcare professionals, AI developers, government bodies, and patient groups, and it provides recommendations for implementing AI under appropriate ethical and legal frameworks. Because radiology is an area where AI implementation is particularly advanced, we also reexamined, in light of domestic research findings, how urgently fairness needs to be addressed.

Paper Details

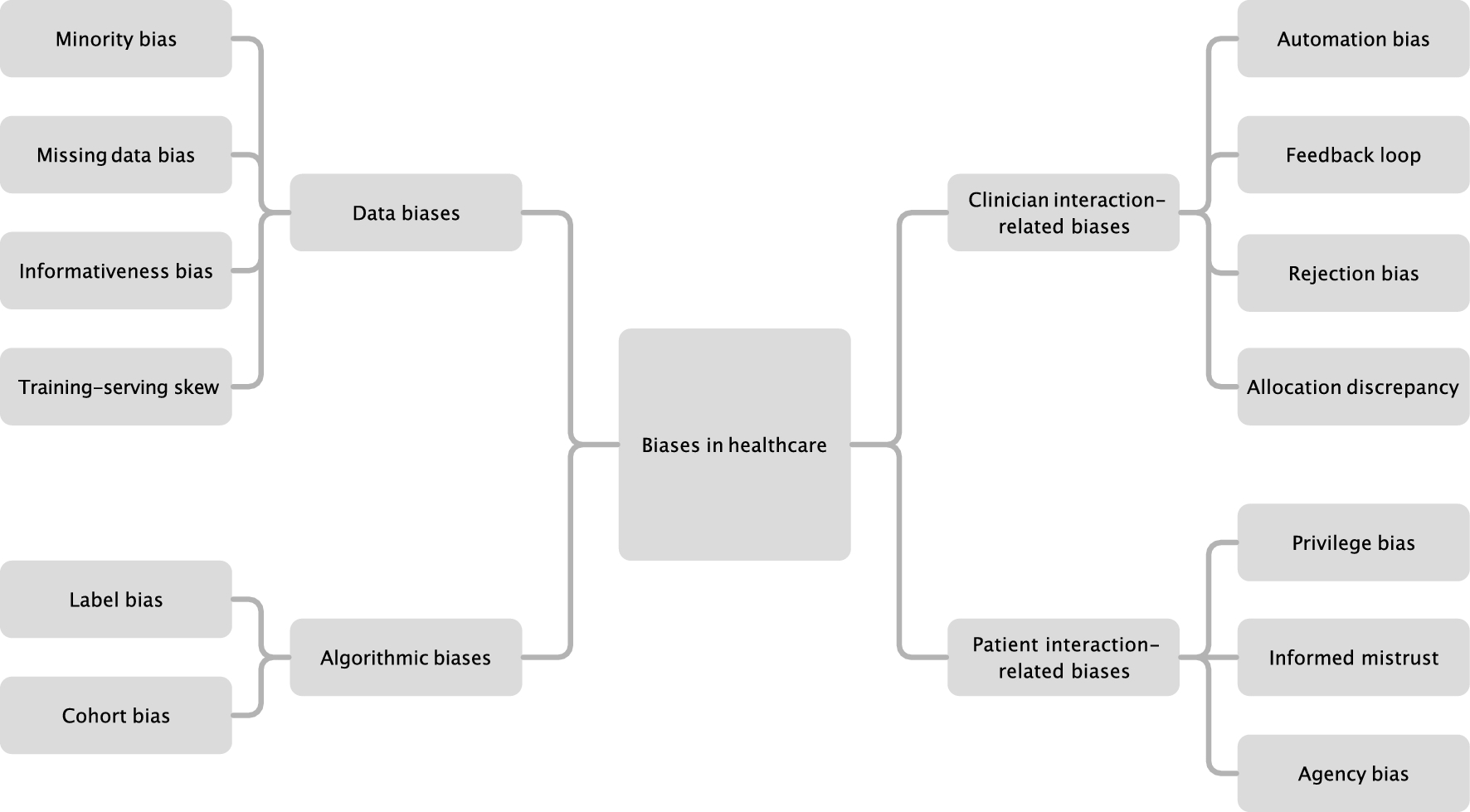

First, we defined fairness as the state in which everyone can receive appropriate medical care regardless of race, social status, gender, or age. From there, we pointed out the danger that disparities caused by data bias, or by structural problems in the algorithms themselves, may go unnoticed in practice. For example, drawing on multiple reports (internal evaluations and external validations), we showed that when an AI diagnostic model for chest X-rays is trained predominantly on cases from a specific population, it risks making more errors in other populations. To prevent this, we propose diversifying data collection during the development stage and having independent organizations conduct regular algorithmic audits after clinical implementation. In addition, to understand and address bias properly, not only healthcare professionals but also patients need to acquire basic knowledge of AI technology. We therefore also recommended establishing educational programs, with an emphasis on creating an environment that supports patient choice. Through this series of initiatives, we believe our responsibility today is to build a system in which medical AI contributes to improved patient outcomes without bias against anyone.
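As a rough illustration of what one step of an algorithmic audit could look like, the sketch below compares a model's error rate across patient subgroups. It is not taken from the paper: the function names `error_rates_by_group` and `max_error_gap`, the group labels, and the toy data are all hypothetical, and a real audit would use clinically validated labels and far larger samples.

```python
from collections import defaultdict


def error_rates_by_group(y_true, y_pred, groups):
    """Return {group: fraction of misclassified cases} for each subgroup.

    Hypothetical helper for illustration; not the method used in the paper.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}


def max_error_gap(rates):
    """Largest difference in error rate between any two subgroups."""
    return max(rates.values()) - min(rates.values())


# Made-up toy data: 1 = finding present, 0 = finding absent.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]  # assumed subgroup labels

rates = error_rates_by_group(y_true, y_pred, groups)
gap = max_error_gap(rates)
print(rates, gap)
```

A large gap between subgroups would not by itself prove unfairness, but it is the kind of signal that could prompt a closer look at how the training data were collected.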