Information

Mar 22, 2025

Large-Scale Meta-Analysis Comparing Diagnostic Accuracy of Generative AI and Physicians

We broadly surveyed and compiled data on the diagnostic capabilities of generative AI, finding that while it does not match specialists, it achieves results comparable to non-specialist physicians.

Paper

A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians

npj Digital Medicine

https://doi.org/10.1038/s41746-025-01543-z

Author's Comments

We began our research on verifying the diagnostic capabilities of generative AI in clinical settings with "Diagnostic Performance of ChatGPT from Patient History and Imaging Findings on the Diagnosis Please Quizzes" published in the journal Radiology. Subsequently, we published seven original papers in the field of radiology and one in the broader medical field, with this publication in npj Digital Medicine serving as a culmination of those achievements. I am very pleased that we were able to design the overall research plan from a long-term perspective and ultimately complete this series of studies.

Paper Overview

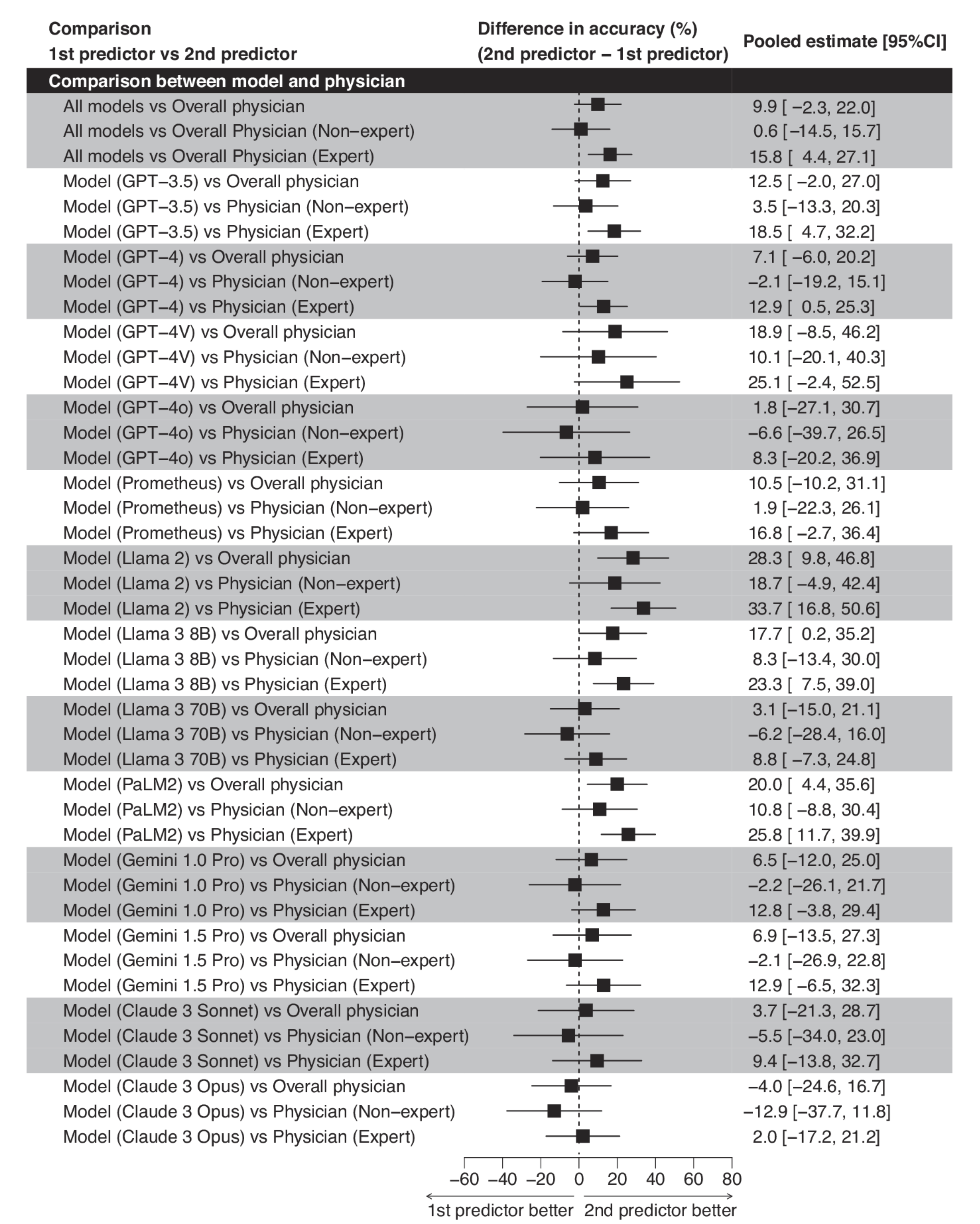

This open-access paper was published in npj Digital Medicine on March 22, 2025. It systematically analyzed 83 studies published between June 2018 and June 2024 to verify the diagnostic accuracy of various generative AI models. As a result, the overall accuracy rate of generative AI was 52.1%. It was impressive that some of the latest generative AI models showed performance that was not significantly different from—and sometimes even higher than—that of non-specialist physicians.

Paper Details

We extracted a total of 18,371 publications from multiple databases and, after excluding duplicates and those not meeting our criteria, conducted a meta-analysis on 83 studies. When comparing the diagnostic capabilities of various generative AI models, the difference from non-specialist physicians was only about 0.6% (p=0.93), suggesting that generative AI could potentially complement medical practice within certain parameters. Furthermore, some of the latest models (GPT-4, GPT-4o, Llama3 70B, Gemini 1.0 Pro, Gemini 1.5 Pro, Claude 3 Sonnet, Claude 3 Opus, etc.) showed slightly higher performance than non-specialists, though the difference was not statistically significant. However, differences still remain when compared with specialists, and the limitations of generative AI become evident particularly in scenarios requiring advanced clinical knowledge. Additionally, there were no substantial differences in accuracy across medical specialties, with no field showing particularly outstanding results. This study also pointed out that many of the target papers carry bias risks, particularly regarding the transparency of training data and thus the difficulty of external validation. These remain future challenges. While we believe that generative AI has not yet reached the level of specialist physicians, there is ample room for it as an educational aid and as diagnostic assistance, and we plan to proceed with accuracy verification in more practical environments in the future.